Quick Answer

Prompt engineering is not dead, but it is no longer enough on its own. In 2026, high-performing AI products are built using a combination of fine-tuning, in-context learning, and smart prompting. Each approach solves a different problem. Knowing which to use, and when, is now the most important skill for anyone building seriously with AI.

The Debate That Will Not Go Away

A few years ago, writing a good prompt felt like the secret weapon. Teams were hiring prompt engineers. Courses were selling fast. And people were discovering that a few carefully chosen words could push a model from mediocre to genuinely useful. Then the goalposts moved. Models became more capable. The tricks that used to matter started to matter less. A new argument appeared across developer forums, AI research blogs, and startup pitch decks: prompt engineering is dying. Fine-tuning and in-context learning are the future. That framing is too simple. The teams doing the most sophisticated work with AI in 2026 are not picking one technique and abandoning the others. They are making sharper decisions about which tool fits which situation. This article breaks down what each approach actually is, where each one works well, and where each one falls short. The goal is a clear answer to a question that matters right now: which combination of these three techniques produces the most capable and cost-effective AI system for your specific use case.

What Prompt Engineering Actually Does and Where It Breaks Down

Prompt engineering is the practice of designing the text input to a model in a way that produces the best possible output. That includes the system prompt and the structure of individual user queries. Done well, it is genuinely powerful. Chain-of-thought prompting, where the model is asked to reason step by step before answering, has been shown across multiple studies to significantly improve accuracy on complex tasks. Few-shot prompting, where examples are included in the input to show the model what good output looks like, sharpens tone, format, and precision without any additional training. The limits, though, are real. A prompt that performs well in testing can behave inconsistently when real users phrase things differently. A cleverly written prompt cannot teach a model facts it was never trained on. And at production scale, a long and elaborate system prompt adds token cost to every single request, which compounds fast across thousands of daily interactions. Prompt engineering is fast, flexible, and cheap to start. But as a system grows in volume or complexity, it runs into a ceiling. That ceiling is exactly where fine-tuning and in-context learning become necessary.

Fine-Tuning in 2026 Is Not the Expensive Process It Used to Be

The old picture of fine-tuning was intimidating. Large GPU clusters. Months of compute time. Specialist engineering teams. Most organizations could not justify the cost, and many did not bother trying. That picture is outdated. Parameter-efficient fine-tuning methods, particularly a technique called LoRA which stands for Low-Rank Adaptation, have changed the economics completely. LoRA updates only a small fraction of the model parameters rather than the entire network. A 2024 report from Hugging Face found that LoRA-based fine-tuning reduces compute requirements by over 90 percent compared to full fine-tuning, with results that are often indistinguishable in quality. What fine-tuning actually delivers is three things that prompting alone cannot produce reliably. Consistency. A fine-tuned model does not need a long system prompt reminding it how to behave in every session. The behavior is embedded in the model weights and stays stable across varied user inputs.

- Domain expertise. A model trained on a specific set of documents, a professional domain, or a narrow task will consistently outperform a general-purpose model on that domain, even when the general model is given the best possible prompt.

- Cost efficiency at scale. Smaller fine-tuned models often match or exceed the performance of larger general models on specific tasks at a fraction of the inference cost. Over millions of requests, that gap becomes very significant.

- The tradeoff is inflexibility. A fine-tuned model is optimized for its training task. If requirements change substantially, retraining is needed. This is why fine-tuning works best when the desired behavior is clearly defined and stable before the training run begins.

In-Context Learning Is the Technique Most Teams Underuse

In-context learning sits between prompt engineering and fine-tuning in a way that many teams overlook entirely. The idea is that modern language models adapt their behavior based on examples or information shown to them within the context window, without any changes to the underlying model weights. This goes beyond basic few-shot examples. Advanced in-context learning means dynamically retrieving relevant material, past conversations, domain-specific documents, or updated knowledge, and inserting it into the context at the moment of each request. Retrieval-augmented generation, commonly called RAG, is the most widely adopted implementation of this idea. Stanford researchers studying in-context learning across multiple frontier models in 2024 found that well-designed dynamic context injection closed more than 80 percent of the performance gap between a general model and a domain-specific fine-tuned model, at a much lower implementation cost. That result is significant for teams that need domain-aware behavior but are not yet ready to commit to a full fine-tuning pipeline. The practical strength of in-context learning is real-time flexibility. The context updates with every request. A model can be given current product information, a specific user history, or a document published today, and it adapts immediately. Fine-tuning cannot match that kind of knowledge currency. Prompting alone cannot scale it reliably at volume. In-context learning handles it well.

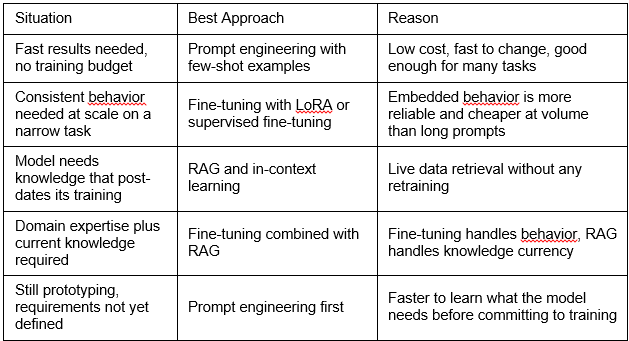

How to Decide Which Approach to Use

The most practical contribution to this debate is not an argument for one technique over the others. It is a clear framework for making the decision based on what the system actually needs to do.

Choosing the Right Technique for the Right Job

The most common mistake teams make is fine-tuning too early. They spend real time and money before they clearly understand what behavior they are trying to produce. Prompting and in-context learning are better starting points because they are cheap to test and fast to change. The second common mistake is over-relying on prompting for tasks that genuinely require fine-tuning. Trying to maintain consistent tone, specialized formatting, or narrow domain behavior purely through prompts is a losing battle at scale. Once the requirements are clear and stable, fine-tuning is the cleaner and cheaper long-term answer.

A Practical Decision Checklist

These six questions point toward the right answer faster than any theoretical comparison between the techniques.

- Is the required behavior narrow and well-defined? If yes, fine-tuning is likely the right answer. If the scope is broad or still evolving, start with prompting.

- Does the model need knowledge from after its training cutoff? If yes, RAG or dynamic context injection is mandatory. No amount of prompting or fine-tuning solves a knowledge gap.

- How many requests will this system handle per month? At low volume, a longer system prompt is affordable. At high volume, the cost difference between a fine-tuned model and a prompted general model adds up quickly.

- Is consistency more important than flexibility? Fine-tuned models behave consistently. General models given prompts can be influenced by unusual user phrasing. If consistency is critical, fine-tuning reduces that variance.

- How quickly do requirements change? Fine-tuning locks behavior into the model weights. If the use case is evolving fast, prompting and in-context learning are much faster to update.

- Has the desired behavior been validated before training? Fine-tuning the wrong behavior into a model is costly to undo. Validate outputs clearly before committing to a training run.

The Final Answer: Prompt Engineering Evolved. It Did Not Die.

The claim that prompt engineering is dead makes for a strong headline. As a description of what is actually happening in 2026, it does not hold up. What is true is that prompting alone is not enough for serious AI applications anymore. The teams building the most capable and cost-effective AI products are using all three approaches together. Prompting handles flexibility. Fine-tuning handles consistency and scale. In-context learning handles real-time knowledge and domain adaptation. None of these techniques makes the others obsolete. Each one fills a gap the others cannot. The skill that matters most in 2026 is not mastering any single technique. It is knowing which one to reach for in a given situation, and understanding the tradeoffs well enough to make that call with confidence. Prompt engineering grew up. It became one tool in a larger toolkit rather than the whole toolkit. That is not a loss. That is what the field maturing actually looks like.

Your Next Step

Pick one active project where model behavior feels unreliable or infrastructure cost feels too high. Run it through the six-question checklist above. The answers will almost always point clearly toward whether the system needs better prompts, a fine-tuning run, or a retrieval layer added. That is the fastest path from the debate to a working solution.